- By Omega Team

Across factories, smart cities, homes, and even distant planets, autonomous navigation is transforming how intelligent machines perceive and interact with their surroundings. These systems are evolving beyond pre-programmed responses, developing the ability to make real-time decisions, adapt to dynamic environments, and move with remarkable independence. This article dives into the foundational technologies fueling this shift, explores diverse real-world applications, addresses critical challenges, and highlights the future trends steering the evolution of mobile intelligent systems. As robotics becomes more deeply embedded in our daily lives, autonomous navigation emerges as a pivotal force driving smarter automation, enabling safer operations, and opening new possibilities for exploration and human-machine collaboration.

What is Autonomous Navigation?

Autonomous navigation is the capability of a robot or vehicle to independently perceive its surroundings, determine its location, plan a safe and efficient path, and move from one point to another without human intervention. By integrating real-time perception, mapping, and control, it mimics human-like decision-making and enables intelligent movement through complex environments. What distinguishes autonomous navigation is its adaptability: robots can dynamically adjust their routes, avoid unexpected obstacles, and operate effectively in ever-changing settings like crowded warehouses, urban streets, or rugged outdoor terrains. This technology relies on a combination of advanced sensors, AI algorithms, and onboard computing to interpret and respond to the world around it. As autonomous systems continue to evolve, they are playing an increasingly vital role in sectors like logistics, agriculture, healthcare, and defense. Ultimately, autonomous navigation is not just a technical milestone but a foundational pillar for building smarter, more responsive machines that coexist and collaborate with humans.

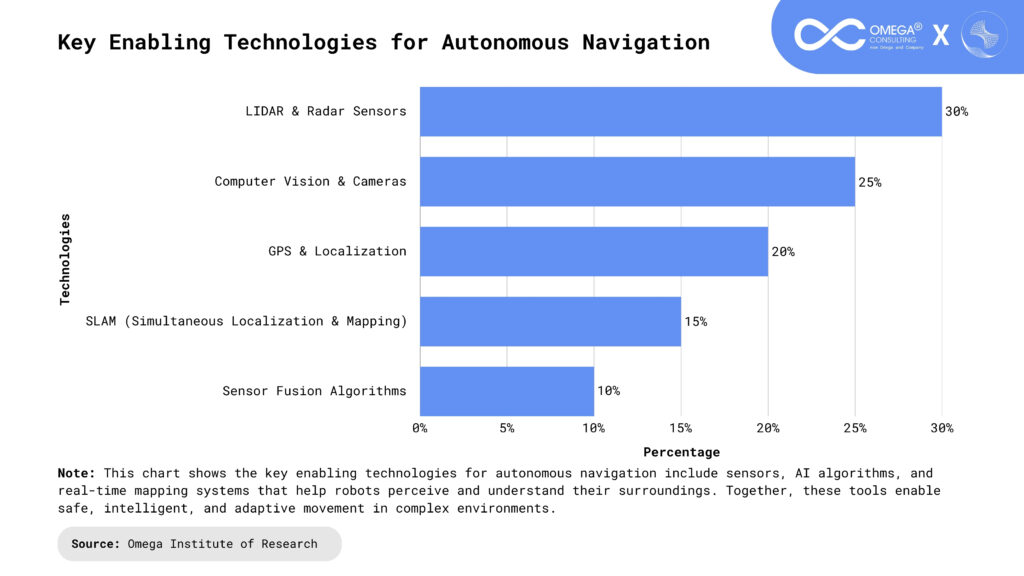

Core Technologies Behind Autonomous Navigation

To navigate autonomously, robots rely on a suite of sophisticated technologies that work together to simulate awareness, intelligence, and mobility.

Perception Systems: Perception systems are the foundation of a robot’s ability to “see” and interpret its environment. These include LiDAR, stereo cameras, radar, ultrasonic sensors, and infrared detectors. They gather raw environmental data such as distance, depth, texture, and motion. This information is processed using AI and computer vision to classify objects and detect changes in real-time. Advanced perception is crucial for obstacle detection, navigation in low-light areas, and safety in human environments.
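As a rough illustration of the first processing step, the sketch below converts a planar range scan into Cartesian points and finds the closest return. The function names, the scan layout (a start angle plus a fixed angular step), and the sample values are assumptions made for this example; they are not taken from any particular sensor driver.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment, max_range):
    """Convert a planar range scan (polar) into (x, y) points in the sensor frame."""
    points = []
    for i, r in enumerate(ranges):
        # Skip invalid or out-of-range returns (e.g. inf when nothing was hit).
        if not math.isfinite(r) or r <= 0.0 or r > max_range:
            continue
        angle = angle_min + i * angle_increment
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points


def nearest_obstacle(points):
    """Return (distance, point) for the closest return, or None for an empty scan."""
    if not points:
        return None
    closest = min(points, key=lambda p: math.hypot(p[0], p[1]))
    return math.hypot(closest[0], closest[1]), closest


# Hypothetical five-beam scan covering -45 to +45 degrees.
scan = [2.0, 1.5, float("inf"), 0.8, 3.0]
points = scan_to_points(scan, angle_min=-math.pi / 4,
                        angle_increment=math.pi / 8, max_range=5.0)
distance, point = nearest_obstacle(points)
print(f"nearest obstacle at {distance:.2f} m, position {point}")
```

A real perception stack would go on to cluster and classify these points; the sketch stops at the geometry.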

Localization and Mapping (SLAM): Simultaneous localization and mapping (SLAM) allows a robot to construct a map of an unknown environment while determining its location within that space. It fuses data from IMUs, LiDAR, visual sensors, and GPS where available to build dynamic spatial awareness. SLAM systems enable continuous updates to both the map and the robot’s estimated position. This is essential for autonomous operation in GPS-denied areas such as indoor buildings or underground tunnels. Modern SLAM algorithms can now integrate semantic information, helping robots understand not just where things are but what they are.

Path Planning Algorithms: Path planning algorithms help robots choose the safest, most efficient path to their goal by evaluating possible routes and avoiding obstacles. Algorithms like A*, D*, and RRT play a critical role. Planners factor in dynamic elements, like moving humans or changing terrain, to recalculate paths in real time. Path planning ensures that navigation is not only possible but optimized for energy use and time. AI-driven planning systems are now learning to prioritize smoother, safer, and more human-like motion.
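To make the path planning idea concrete, here is a minimal sketch of A* on a small occupancy grid. The grid, the 4-connected movement model, the unit step cost, and the Manhattan-distance heuristic are all assumptions chosen for illustration; production planners typically work on richer cost maps.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) and 1 (blocked) cells."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected unit-cost grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # entries are (f, g, cell)
    came_from = {}
    g_cost = {start: 0}
    while open_heap:
        _, g, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if g > g_cost[current]:
            continue  # stale heap entry; a cheaper route was found later
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_g = g + 1
                if new_g < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = new_g
                    came_from[(nr, nc)] = current
                    heapq.heappush(
                        open_heap, (new_g + heuristic((nr, nc)), new_g, (nr, nc)))
    return None  # goal unreachable


# Hypothetical map: 1 marks an obstacle cell.
grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
path = astar(grid, start=(0, 0), goal=(3, 0))
print(path)
```

Replanning in a changing environment can be approximated by rerunning the search whenever the grid is updated; incremental planners such as D* avoid recomputing from scratch.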

Machine Learning and Artificial Intelligence: AI enables robots to interpret sensory data, make autonomous decisions, and adapt to new scenarios using machine learning. Techniques like reinforcement learning allow continuous self-improvement. Robots can learn from historical data, simulate actions before taking them, and generalize behavior to unseen environments. AI also improves anomaly detection and failure prediction during navigation tasks. This intelligence is key to operating in unpredictable or human-populated environments where rules aren’t fixed.
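The reinforcement learning idea can be sketched with tabular Q-learning on a toy problem. Everything here is an assumption made for the example: a one-dimensional corridor of five cells, a reward of 1.0 for reaching the last cell, a small step penalty, and the hyperparameter values. Real navigation policies use far larger state spaces and usually function approximation.

```python
import random


def train_corridor(n_states=5, episodes=300, alpha=0.5, gamma=0.9,
                   epsilon=0.2, seed=0):
    """Tabular Q-learning in a 1-D corridor; action 0 moves left, 1 moves right."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]
    goal = n_states - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            # Epsilon-greedy: explore occasionally, otherwise act greedily.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else min(goal, state + 1)
            reward = 1.0 if next_state == goal else -0.01
            # The goal is terminal, so it contributes no future value.
            future = 0.0 if next_state == goal else gamma * max(q[next_state])
            q[state][action] += alpha * (reward + future - q[state][action])
            state = next_state
            if state == goal:
                break
    return q


q_table = train_corridor()
# Greedy action for every non-goal state after training.
policy = [0 if q_table[s][0] > q_table[s][1] else 1 for s in range(4)]
print(policy)
```

The learned table encodes which direction is worth more in each cell, which is the same principle a navigation policy uses at much larger scale.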

Control Systems: Control systems translate navigation plans into physical movement by managing motors, wheels, and actuators with precision. They ensure the robot stays on track, adjusts for drift, and reacts to feedback. Closed-loop control systems use real-time sensor input to correct trajectory errors continuously. Stability, responsiveness, and efficiency are achieved through control techniques such as PID control, model predictive control (MPC), or fuzzy logic. Without robust control, even the best navigation plans can fail to produce smooth and safe movement.
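A closed-loop correction of this kind can be sketched with a textbook PID controller steering a robot toward a target heading. The gains, the output limit, and the toy plant model (the commanded turn rate integrates directly into heading) are assumptions picked for illustration, not tuned values for any real platform.

```python
class PID:
    """Textbook PID controller with output clamping (gains are illustrative)."""

    def __init__(self, kp, ki, kd, output_limit):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.output_limit = output_limit
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt
        # No derivative on the first call, since there is no previous error yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Clamp to what the actuators can deliver.
        return max(-self.output_limit, min(self.output_limit, output))


def simulate_heading(target, steps=400, dt=0.05):
    """Toy plant: the commanded turn rate (rad/s) integrates into heading (rad)."""
    pid = PID(kp=1.5, ki=0.5, kd=0.1, output_limit=1.0)
    heading = 0.0
    for _ in range(steps):
        turn_rate = pid.update(target, heading, dt)
        heading += turn_rate * dt
    return heading


final_heading = simulate_heading(target=1.0)
print(f"heading after 20 s: {final_heading:.3f} rad")
```

This sketch has no anti-windup logic, so the integral term keeps accumulating while the output is clamped; a practical controller would normally limit it.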

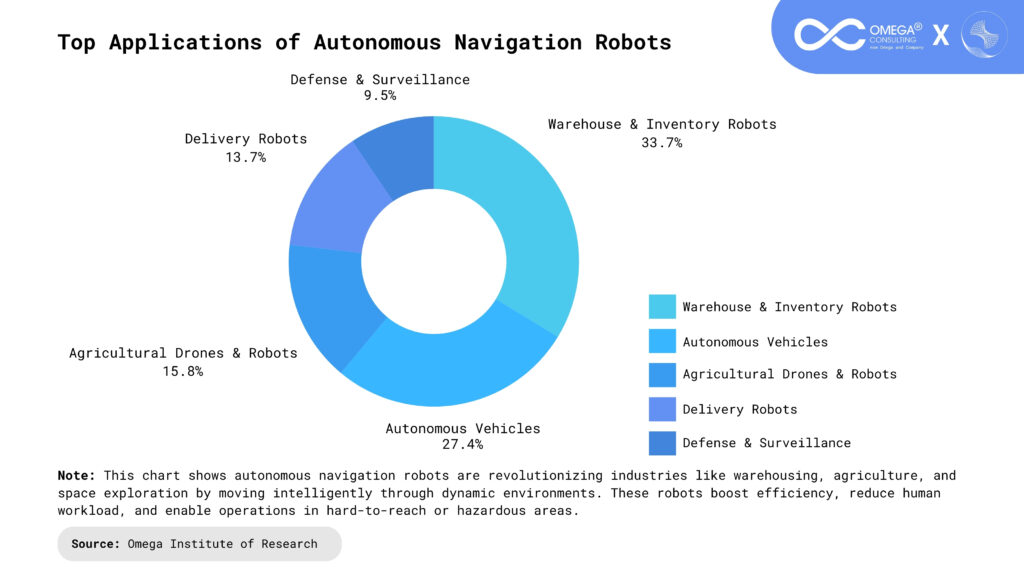

Applications of Autonomous Navigation in the Real World

The impact of autonomous navigation extends across industries, transforming traditional processes and introducing new capabilities in mobility and automation.

Self-Driving Vehicles: Self-driving cars combine AI, GPS, LiDAR, and cameras to perceive traffic, interpret road signs, and navigate safely without human input. They continuously predict the motion of nearby vehicles, cyclists, and pedestrians to make safe decisions. Autonomous vehicles are being tested in urban and rural environments to improve reliability across diverse conditions. They have the potential to reduce traffic congestion, emissions, and the human cost of driving errors.

Warehouse and Industrial Robots: Autonomous mobile robots (AMRs) in warehouses navigate complex layouts to pick, sort, and deliver items autonomously. They avoid humans, adapt to inventory changes, and communicate with centralized systems. These robots increase efficiency, reduce walking time for workers, and support just-in-time inventory models. They are scalable and can be rapidly deployed in new warehouses without reprogramming the entire layout. With AI integration, AMRs can optimize delivery paths and predict demand fluctuations.

Drones and UAVs: Autonomous drones use GPS, onboard cameras, and AI to navigate and perform tasks like aerial mapping, crop monitoring, and inspection. They adjust their flight paths to avoid obstacles like trees, power lines, or changing weather conditions. In disaster zones, drones provide rapid situational awareness without risking human responders. Their mobility and reach make them indispensable in remote sensing and critical infrastructure monitoring.

Space Exploration Rovers: Planetary rovers use autonomous navigation to explore alien terrains with minimal real-time guidance from Earth. Their SLAM systems are tuned to work in harsh, uneven terrains with limited visibility and delayed communication. They autonomously decide where to go, what samples to collect, and how to avoid hazards like rocks or cliffs. This independence allows extended exploration missions and higher data return with lower human overhead.

Healthcare and Service Robots: In hospitals, autonomous robots carry supplies, medications, or meals across floors and departments, reducing staff burden. They can detect crowded areas, reroute paths, and operate elevators autonomously. In hospitality, robots provide concierge services or room deliveries, enhancing guest experience. These robots reduce physical contact, improve hygiene, and free up human staff for higher-priority tasks.

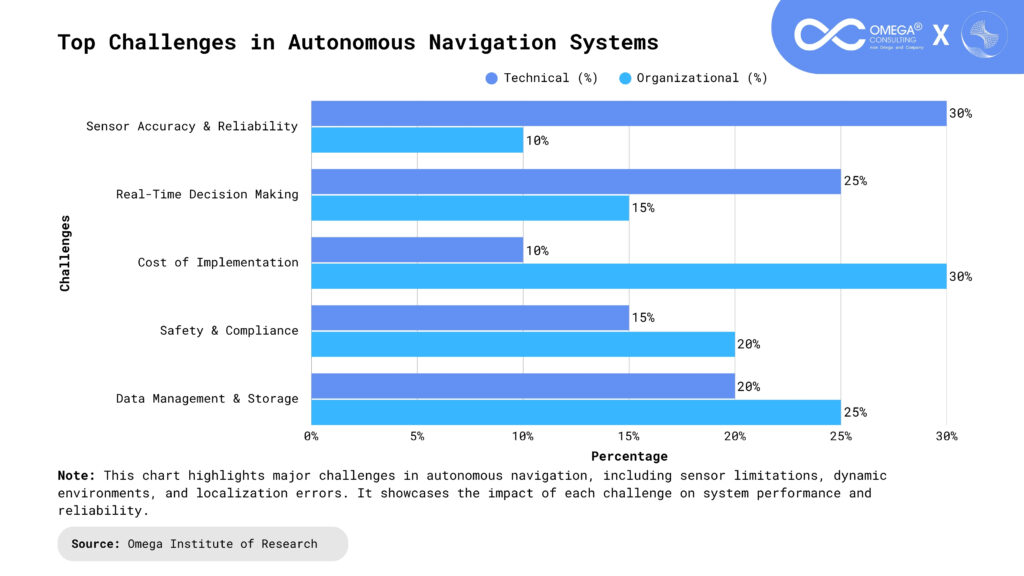

Challenges in Autonomous Navigation

Despite significant progress, several barriers limit the widespread deployment of autonomous navigation systems.

Dynamic and Unstructured Environments: Environments filled with unpredictable elements such as people, animals, weather changes, or moving vehicles pose serious challenges to autonomous robots. Unlike fixed industrial settings, these dynamic surroundings require robots to make real-time decisions based on incomplete or rapidly changing data. To safely handle such variability, sophisticated AI systems must be in place to predict behaviors and modify navigation strategies on the fly. Without this adaptability, the risk of collisions, navigation failure, or operational delays increases significantly.

Sensor Limitations and Failures: Autonomous systems rely heavily on sensors like LiDAR, cameras, and radar to perceive the world, but these sensors are not foolproof. Environmental factors such as darkness, fog, rain, dust, or physical obstructions can degrade sensor performance, leading to inaccurate perception or localization errors. To mitigate this, systems must implement redundancy and advanced sensor fusion techniques to cross-verify data and maintain reliability. However, achieving this robustness often increases the hardware complexity and cost of the robot.
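One simple way to picture sensor fusion with cross-verification is inverse-variance weighting of two independent range readings, plus a consistency check between them. The sensor names, readings, variances, and the three-sigma threshold below are assumptions for this example; a deployed system would more likely use a Kalman filter or a similar estimator.

```python
import math


def fuse(estimates):
    """Inverse-variance weighted fusion of independent (value, variance) pairs."""
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    # The fused variance is smaller than any single input variance.
    return value, 1.0 / total


def disagree(a, b, n_sigma=3.0):
    """Flag two readings whose difference exceeds n_sigma combined std deviations."""
    (value_a, var_a), (value_b, var_b) = a, b
    return abs(value_a - value_b) > n_sigma * math.sqrt(var_a + var_b)


# Hypothetical range readings to the same obstacle: (metres, variance).
lidar = (2.00, 0.01)
radar = (2.30, 0.09)

if disagree(lidar, radar):
    print("sensors disagree; fall back to a conservative behaviour")
else:
    distance, variance = fuse([lidar, radar])
    print(f"fused range: {distance:.2f} m (variance {variance:.4f})")
```

When a sensor degrades, for example a camera in fog, raising its variance lowers its weight in the fused estimate without discarding it outright.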

Safety and Ethical Concerns: As autonomous robots become more integrated into public and private spaces, ensuring that their decisions do not result in harm becomes a top concern. AI-driven navigation must align with ethical standards, ensuring that robots act in ways that are fair, transparent, and justifiable. Questions like “Who is responsible in the event of an autonomous failure?” remain unresolved in many legal systems. Developers must take proactive steps to embed explainability, accountability, and safety protocols into every layer of the robot’s decision-making architecture.

High Computational Requirements: Navigating complex environments in real time requires immense computational power to process sensory inputs, run AI models, and control movement. Onboard computers must manage this without overheating, draining batteries, or slowing performance. While edge AI chips such as NVIDIA Jetson or Google Coral offer powerful solutions, they still face limitations in resource-constrained environments. Striking the right balance between processing speed, energy efficiency, and physical size is a continual design challenge for robotic engineers.

Regulatory and Legal Hurdles: One of the biggest non-technical obstacles to deploying autonomous systems lies in inconsistent legal and regulatory frameworks across countries and industries. Proving the safety, reliability, and predictability of autonomous machines under varying real-world conditions is essential but difficult. As a result, innovation is often delayed by lengthy approval cycles, compliance testing, and uncertainty about liability in case of errors. Although regulatory bodies and governments are actively working on clearer standards, progress remains uneven globally.

Recent Innovations and Future Trends

The field of autonomous navigation continues to evolve rapidly with breakthrough innovations:

Edge AI for On-Device Decision-Making: Edge AI allows robots to process data locally, reducing their dependence on cloud connectivity and improving decision-making speed. This decentralized approach minimizes latency and enhances operational reliability, especially in offline or remote environments where connectivity is sparse. Robots equipped with edge computing can quickly respond to dynamic conditions, such as avoiding a sudden obstacle or rerouting in emergencies, which makes the approach well suited to drones, delivery bots, and mobile healthcare assistants. It also enhances data privacy, as sensitive information doesn’t need to leave the device.

Multi-Agent and Swarm Navigation: Coordinated fleets of autonomous robots, often referred to as multi-agent systems or swarms, are redefining how large-scale robotic operations are handled. These robots communicate with one another in real time to share mapping data, distribute tasks, and avoid collisions. Applications range from warehouse logistics to agricultural field scanning and even military reconnaissance. For these systems to succeed, developers must focus on robust communication protocols, decentralized decision-making, and dynamic conflict resolution strategies.
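Task distribution across a fleet can be illustrated with a greedy allocator that gives each task to the nearest robot that is still free. The robot and task names, the positions, and the greedy rule itself are assumptions for this sketch. It runs centrally and is not guaranteed to find the best overall assignment; decentralized auctions or the Hungarian algorithm are common alternatives.

```python
import math


def allocate_tasks(robots, tasks):
    """Greedily assign each task to the nearest robot that is still free.

    robots and tasks map identifiers to (x, y) positions.
    """
    free = dict(robots)
    assignment = {}
    for task_id, task_pos in tasks.items():
        if not free:
            break  # more tasks than robots; the remainder stay unassigned
        nearest = min(free, key=lambda robot_id: math.dist(free[robot_id], task_pos))
        assignment[task_id] = nearest
        del free[nearest]
    return assignment


# Hypothetical warehouse fleet and pick locations, in metres.
robots = {"r1": (0.0, 0.0), "r2": (10.0, 0.0), "r3": (5.0, 5.0)}
tasks = {"pick_A": (9.0, 1.0), "pick_B": (1.0, 1.0)}
assignment = allocate_tasks(robots, tasks)
print(assignment)
```

Because tasks are processed in order, an early task can claim a robot that a later task needed more; that is the trade-off for the simplicity of the greedy rule.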

Neurosymbolic AI for Reasoning: Neurosymbolic AI combines the pattern recognition strengths of neural networks with the logical structure of symbolic reasoning, creating a hybrid model ideal for high-level decision-making. This allows robots not only to learn from data but also to reason about abstract principles such as safety, legality, and ethical conduct. As transparency and trust become more important in autonomous systems, neurosymbolic AI helps make robot behavior more interpretable and accountable. Its growing use in healthcare robotics, autonomous vehicles, and legal tech underscores its value in sensitive decision domains.

Bio-Inspired Navigation Models: Nature offers countless models of efficient movement and problem-solving, and robotics is increasingly borrowing from these biological systems. Robots are now being designed to emulate the navigation strategies of ants (pheromone-based paths), bats (echolocation), and birds (flocking behavior), among others. These models require less computational power and fewer sensors, making them ideal for small, low-energy robots used in search-and-rescue missions or environmental monitoring. Bio-inspired approaches also provide greater resilience in visually or GPS-compromised environments.

Human-Robot Interaction and Shared Autonomy: The future of autonomous navigation is not just about independence; it’s about collaboration between humans and machines. In shared autonomy models, robots may suggest actions while humans approve, adjust, or override them, creating a safer and more interactive user experience. This is particularly valuable in mission-critical areas like construction, surgery, or eldercare, where human expertise is essential but robotic support can enhance precision and efficiency. Designing systems for intuitive communication and trust is key to unlocking this collaborative future.

Conclusion

Autonomous navigation is the cornerstone of intelligent robotics, enabling machines to perceive their environment, make real-time decisions, and move with purpose across complex and dynamic spaces. Whether it’s a robot in a disaster zone, a drone monitoring farmland, or a self-driving car navigating city streets, this technology is reshaping how we interact with machines and integrate them into daily life. As AI models become more sophisticated and hardware more accessible, robots are evolving from mere tools into intelligent agents capable of operating independently. The journey toward seamless, safe, and scalable autonomous navigation is accelerating, unlocking vast possibilities for innovation across industries and society.

