- By Omega Team

Data is being generated at an unprecedented scale, from smartphones and IoT sensors to autonomous vehicles and smart factories. Traditionally, this data traveled to centralized cloud servers for processing and analysis. However, as industries demand real-time decision-making and stronger data privacy, a new paradigm has emerged: Edge AI. Edge AI, or Artificial Intelligence at the Edge, refers to running AI algorithms directly on local devices such as cameras, sensors, gateways, or embedded systems rather than relying solely on the cloud. This shift is transforming how organizations collect, process, and act on data, enabling faster insights, lower latency, stronger security, and smarter operations. Because it depends less on cloud connectivity, Edge AI also helps maintain business continuity in environments with weak or intermittent networks. It is rapidly becoming a cornerstone technology for next-generation applications across healthcare, manufacturing, transportation, and smart cities.

What is Edge AI?

Edge AI is the convergence of edge computing and artificial intelligence: data is analyzed and acted upon where it is generated, on local devices such as sensors, cameras, gateways, or embedded systems. Unlike traditional cloud-based AI, where raw data must travel to distant servers for processing, Edge AI processes information locally. This approach reduces latency, improves privacy, and supports faster decision-making, making it well suited to applications that require immediate responses or operate with limited connectivity. By embedding AI capabilities in edge devices, organizations can gain efficiency, scalability, and resilience. Local processing also reduces dependence on high-bandwidth networks and can lower operational costs by cutting cloud usage. As a result, Edge AI is becoming a key enabler of real-time analytics, automation, and intelligent decision-making across industries.

How Edge AI Works

Edge AI systems typically involve three core components that work together to bring intelligence closer to where data is generated and used. Between them, they handle local processing, communication, and model execution, so devices can operate efficiently even with limited connectivity.

Edge Devices: These are the hardware endpoints, such as IoT sensors, drones, wearables, cameras, and smartphones, that collect and process data in real time. Many are equipped with specialized accelerators such as GPUs, TPUs, or NPUs to run lightweight AI models directly on the device. By handling computation locally, these devices minimize latency, reduce data transmission costs, and enable faster, context-aware responses. Edge devices are the foundation of Edge AI, turning ordinary machines into intelligent systems capable of making autonomous decisions.

AI Models: These models are developed and trained in the cloud or on high-performance servers using large datasets. Once trained, they are optimized for edge deployment through model compression techniques such as quantization, pruning, or knowledge distillation, which reduce their size and computational load. This optimization lets models run efficiently on resource-constrained devices while keeping any loss of accuracy small. Periodic retraining and redeployment allow these models to learn from new data, improving performance and adaptability over time.
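To make the quantization step concrete, here is a minimal Python sketch of symmetric, per-tensor int8 post-training quantization applied to a plain list of weights. The function names and sample weights are hypothetical; production toolchains such as TensorFlow Lite or PyTorch add calibration data, per-channel scales, and zero points, so treat this as an illustration of the idea rather than any framework's implementation.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to int8 values in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, which would otherwise give a zero scale.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the int8 values."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.82, -1.27, 0.05, 0.40, -0.33]  # hypothetical layer weights
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print("scale:", scale, "max reconstruction error:", max_err)
```

Storing each weight as one signed byte instead of a 4-byte float32 cuts weight storage roughly fourfold, and the rounding error per weight is at most half the scale, which is one reason the accuracy impact of int8 quantization is often small.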

Edge Infrastructure: This layer includes gateways, routers, and microservers that connect edge devices to centralized systems or the cloud. The infrastructure manages data flow, synchronization, and security between distributed nodes. It also handles tasks like firmware updates, workload balancing, and real-time coordination across devices. By enabling hybrid processing, in which some data is analyzed locally and some in the cloud, edge infrastructure supports performance, scalability, and reliability across diverse environments.

Key Benefits of Edge AI

Real-Time Decision-Making: Latency can make or break AI applications. With computation happening locally, Edge AI delivers near-instant results, which is crucial for autonomous systems, healthcare diagnostics, and predictive maintenance. By avoiding the network round trip to distant cloud servers, edge processing supports time-critical use cases where even milliseconds matter. This fast response improves safety, operational control, and efficiency in domains like robotics, smart grids, and transportation. Real-time analytics also let organizations act proactively rather than reactively, turning data into immediately actionable intelligence.

Enhanced Privacy and Security: Sensitive data (such as facial images or health metrics) can stay on the device, reducing exposure to cyber risks and making it easier to comply with privacy regulations like GDPR. Local processing shrinks the attack surface and lowers the risk of data breaches during transmission to the cloud. Encrypted communication protocols and hardware-level security modules can further protect on-device AI systems from unauthorized access. This privacy-by-design approach makes Edge AI particularly valuable in regulated industries such as finance, defense, and healthcare.

Reduced Bandwidth Costs: Since only relevant or summarized information is sent to the cloud, organizations save significantly on data transmission costs and avoid network congestion. This reduction in bandwidth usage is especially beneficial in environments with limited connectivity or large-scale IoT deployments. Edge AI optimizes data flow by filtering out redundant or non-essential data, improving overall system efficiency. Over time, this approach helps businesses scale their AI operations more economically while maintaining high performance and responsiveness.
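Sending "only relevant" data can be as simple as report-by-exception (deadband) filtering: the device forwards a reading only when it has changed meaningfully since the last value it sent. The sketch below is a minimal Python illustration under assumed values; the function name, the 0.5 °C deadband, and the readings are hypothetical, and real deployments often also send periodic heartbeats so the cloud can tell a steady sensor from a failed one.

```python
from typing import List, Optional, Tuple


def deadband_filter(readings: List[float], deadband: float) -> List[Tuple[int, float]]:
    """Return the (index, value) pairs an edge device would forward upstream.

    A reading is forwarded only when it differs from the last forwarded value
    by more than `deadband`; everything else is treated as redundant.
    """
    forwarded: List[Tuple[int, float]] = []
    last_sent: Optional[float] = None
    for i, value in enumerate(readings):
        if last_sent is None or abs(value - last_sent) > deadband:
            forwarded.append((i, value))
            last_sent = value
    return forwarded


if __name__ == "__main__":
    # Hypothetical temperature samples (degrees C) from one sensor.
    samples = [20.0, 20.1, 20.05, 20.6, 20.7, 21.5, 21.4]
    sent = deadband_filter(samples, deadband=0.5)
    print(f"forwarded {len(sent)} of {len(samples)} readings: {sent}")
```

With these sample values, only three of the seven readings leave the device; the rest are absorbed locally.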

Operational Resilience: Edge AI lets systems keep functioning when internet connectivity is limited or lost, which makes it well suited to remote areas, industrial sites, and critical infrastructure. Because AI models run locally, decision-making continues during outages or latency spikes. This self-sufficiency supports reliability in mission-critical applications such as defense operations, oil rigs, and offshore facilities. Organizations also gain greater control over uptime and system stability, minimizing downtime in demanding operational environments.
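Local decisions keep running during an outage; what needs care is the data that would normally be reported upstream. A common pattern is store-and-forward: queue results on the device and upload them once the link returns. The Python sketch below assumes a caller-supplied, hypothetical `send` function that returns True on success; the class name and capacity are illustrative.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Queue results locally while the uplink is down and upload them later.

    `send` is a caller-supplied (hypothetical) upload function that returns
    True on success. The deque is bounded, so during a very long outage the
    oldest messages are discarded instead of exhausting device memory.
    """

    def __init__(self, send: Callable[[dict], bool], capacity: int = 1000) -> None:
        self._send = send
        self._buffer: Deque[dict] = deque(maxlen=capacity)

    def publish(self, message: dict) -> None:
        """Record a locally produced result and try to upload the backlog."""
        self._buffer.append(message)
        self.flush()

    def flush(self) -> int:
        """Upload buffered messages in order; stop at the first failed send."""
        sent = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # still offline: keep the message for the next attempt
            self._buffer.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        """Number of messages waiting for connectivity."""
        return len(self._buffer)


if __name__ == "__main__":
    link = {"up": False}
    uploaded = []

    def fake_send(msg: dict) -> bool:
        # Stand-in for a real network call.
        if link["up"]:
            uploaded.append(msg)
        return link["up"]

    buffer = StoreAndForward(fake_send, capacity=3)
    for seq in range(5):
        buffer.publish({"seq": seq, "decision": "ok"})
    print("pending while offline:", buffer.pending())
    link["up"] = True
    print("flushed after reconnect:", buffer.flush(), uploaded)
```

Bounding the queue is a deliberate trade-off: it sacrifices the oldest telemetry during long outages so the device itself never fails for lack of memory.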

Energy Efficiency: Modern edge AI hardware (such as NVIDIA Jetson modules, Google Coral's Edge TPU, or Intel Movidius VPUs) is designed for low power consumption while maintaining high inference speeds. These specialized processors let devices execute complex AI tasks without excessive energy draw, extending battery life and reducing environmental impact. Edge AI can also improve resource allocation, since processing is distributed across devices rather than concentrated in power-hungry data centers. The result is a sustainable, high-performance ecosystem that balances speed, cost, and energy efficiency.

Edge AI in Action: Real-World Applications

Smart Manufacturing: Factories use Edge AI for predictive maintenance, quality control, and machine vision, reducing downtime and improving productivity. By analyzing sensor data directly on the production floor, machines can detect anomalies, predict equipment failures, and trigger maintenance before breakdowns occur. This local intelligence enables faster corrective actions and continuous optimization of workflows. Over time, manufacturers can achieve higher yield rates, lower costs, and more consistent product quality with minimal human intervention.
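As a hedged illustration of on-device anomaly detection, the Python sketch below flags a sensor reading that lies far outside the recent norm using a rolling z-score. This is a simple statistical stand-in, not a trained predictive-maintenance model; the class name, window size, threshold, and vibration values are all hypothetical.

```python
import math
from collections import deque
from typing import Deque


class RollingZScoreDetector:
    """Flag sensor readings that deviate sharply from the recent window.

    A reading is anomalous when it lies more than `threshold` sample standard
    deviations from the mean of the last `window` readings.
    """

    def __init__(self, window: int = 50, threshold: float = 3.0) -> None:
        self.values: Deque[float] = deque(maxlen=window)
        self.threshold = threshold

    def update(self, x: float) -> bool:
        """Score x against the current window, then add it to the window."""
        anomalous = False
        if len(self.values) >= 2:
            mean = sum(self.values) / len(self.values)
            var = sum((v - mean) ** 2 for v in self.values) / (len(self.values) - 1)
            std = math.sqrt(var)
            if std > 0 and abs(x - mean) / std > self.threshold:
                anomalous = True
        # Simplification: anomalies are also added, which widens the window's spread.
        self.values.append(x)
        return anomalous


if __name__ == "__main__":
    detector = RollingZScoreDetector(window=10, threshold=3.0)
    vibration_mm_s = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 5.0]  # hypothetical readings
    for t, reading in enumerate(vibration_mm_s):
        if detector.update(reading):
            print(f"t={t}: reading {reading} flagged for maintenance review")
```

Because the detector keeps only a small window in memory and does a few arithmetic operations per reading, it can run on very modest hardware next to the machine it monitors.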

Healthcare: Wearables and diagnostic devices use on-device AI for real-time patient monitoring, detecting irregularities like arrhythmia without constant cloud communication. This allows healthcare professionals to receive instant alerts and intervene before conditions worsen. Edge AI also enhances data privacy, as sensitive patient information is processed locally, reducing the risk of leaks. In hospitals, smart imaging systems and bedside devices equipped with edge intelligence improve diagnosis speed and accuracy, transforming the way care is delivered.

Retail: Retailers deploy smart cameras and sensors to analyze foot traffic, inventory, and shopper behavior locally, delivering faster insights and personalized experiences. Edge AI enables dynamic pricing, demand forecasting, and checkout-free shopping by processing data in real time. It can also enhance security by identifying theft or unusual activity instantly. By reducing cloud dependency, retailers improve both customer experience and operational efficiency, ensuring seamless in-store analytics and better business outcomes.

Autonomous Vehicles: Self-driving cars rely on Edge AI to process lidar, radar, and camera data in real time for safe and accurate navigation. Because fractions of a second matter on the road, on-board AI models interpret traffic conditions, pedestrians, and obstacles without relying on external networks. This enables rapid decisions about braking, lane changes, and collision avoidance. Local processing also keeps the vehicle functional in areas with poor connectivity, making autonomous driving safer and more dependable.
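A back-of-the-envelope calculation shows why latency matters here: the distance a vehicle covers before it can react is its speed multiplied by the decision latency. The sketch below assumes constant speed, and the 10 ms and 100 ms latencies are illustrative assumptions, not measurements of any particular vehicle or network.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at constant speed while waiting `latency_ms` for a decision."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # km/h -> m/s
    return speed_m_per_s * (latency_ms / 1000)  # ms -> s


if __name__ == "__main__":
    # Illustrative latencies: a fast on-device inference vs. a cloud round trip.
    for label, latency_ms in [("on-device", 10), ("cloud round trip", 100)]:
        metres = distance_during_latency(100, latency_ms)
        print(f"{label}: {metres:.2f} m travelled at 100 km/h")
```

At 100 km/h (about 27.8 m/s), every extra 100 ms of latency corresponds to roughly 2.8 m of travel before the vehicle can respond.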

Smart Cities: From traffic lights that adapt to congestion to waste bins that signal when they’re full, Edge AI powers efficient, connected urban systems. Localized AI processing helps reduce traffic jams, optimize energy usage, and improve public safety by analyzing data from thousands of sensors and cameras across the city. This real-time responsiveness allows cities to become more sustainable, livable, and adaptive to changing conditions. By decentralizing intelligence, Edge AI lays the groundwork for truly autonomous urban ecosystems.

Challenges of Edge AI Implementation

Hardware Limitations: Edge devices often have constrained processing power, memory, and storage capacity compared to cloud servers. Running complex AI models requires efficient hardware design and specialized chips that balance speed with low energy consumption. Additionally, deploying and maintaining these devices in diverse environments like factories or outdoor sites can be costly and technically demanding. Organizations must invest in robust, scalable infrastructure to overcome these limitations effectively.

Model Optimization: AI models must be compressed to run efficiently on resource-limited devices, with as little loss of accuracy as possible. Techniques such as quantization, pruning, and knowledge distillation reduce model size while retaining critical features. However, finding the right trade-off between efficiency and accuracy can be complex. Continuous retraining and updating of models are also essential so that edge systems stay accurate as data patterns change.
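Pruning, one of the techniques named above, can be illustrated with unstructured magnitude pruning: the weights with the smallest absolute values are set to zero. The Python sketch below works on a flat list of hypothetical weights; in practice, pruning is usually followed by fine-tuning to recover accuracy, and memory savings only materialize when the zeros are stored in a sparse format or the hardware can skip them.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_prune = set(order[:k])
    return [0.0 if i in to_prune else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    layer = [0.9, -0.05, 0.3, 0.01, -0.7, 0.2]  # hypothetical weights
    pruned = magnitude_prune(layer, sparsity=0.5)
    zeros = sum(1 for w in pruned if w == 0.0)
    print(pruned, f"({zeros}/{len(pruned)} weights removed)")
```

The intuition behind this heuristic is that small-magnitude weights contribute least to a layer's output, so removing them tends to cost the least accuracy.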

Scalability: Managing and updating distributed AI models across thousands of devices requires robust orchestration and monitoring tools. Synchronizing updates, maintaining version control, and ensuring consistent performance across devices can be challenging. Cloud-edge collaboration frameworks are often needed to streamline large-scale deployments. Without efficient management, organizations risk fragmented systems and inconsistent data insights, limiting the scalability of their Edge AI solutions.
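One widely used way to update models safely across a large fleet is a staged (canary) rollout: a new version goes to a small, stable subset of devices first, then to progressively more. The Python sketch below assigns each device to a deterministic bucket by hashing its ID. The function names, device IDs, and version strings are hypothetical, and real orchestration platforms provide their own rollout mechanisms.

```python
import hashlib


def rollout_bucket(device_id: str, buckets: int = 100) -> int:
    """Deterministically map a device ID to a bucket in [0, buckets)."""
    digest = hashlib.sha256(device_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % buckets


def target_version(device_id: str, stable: str, candidate: str, rollout_percent: int) -> str:
    """Pick the model version a device should run at the current rollout stage.

    Because buckets never change, raising `rollout_percent` only adds devices
    to the candidate group; no device flips back and forth between versions.
    """
    if rollout_bucket(device_id) < rollout_percent:
        return candidate
    return stable


if __name__ == "__main__":
    fleet = [f"camera-{n:04d}" for n in range(1000)]  # hypothetical device IDs
    for percent in (1, 10, 50, 100):
        upgraded = sum(
            target_version(d, "model-v1", "model-v2", percent) == "model-v2" for d in fleet
        )
        print(f"{percent:>3}% stage: {upgraded} devices on model-v2")
```

Hashing keeps the assignment consistent without a central table of which device is in which group, and pausing the rollout at a low percentage limits the blast radius of a bad model.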

Security: Devices at the edge can be vulnerable to tampering, physical attacks, and cyber intrusions. Ensuring device authentication, encrypted communication, and secure firmware updates is crucial for maintaining system integrity. Additionally, because edge environments are often decentralized and exposed, they require advanced threat detection and anomaly monitoring mechanisms. A comprehensive security strategy that integrates both hardware and software protections is essential to safeguard Edge AI ecosystems.
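Secure updates hinge on the device refusing any package it cannot authenticate. The Python sketch below uses an HMAC-SHA256 tag from the standard library as a minimal stand-in. This is an assumption made to keep the example dependency-free: production firmware signing usually relies on asymmetric signatures (for example Ed25519 or ECDSA), so devices hold only a public key rather than a shared secret. The function names, key, and payload are hypothetical.

```python
import hashlib
import hmac


def sign_update(payload: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag for an update package (build-server side)."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_update(payload: bytes, tag: str, key: bytes) -> bool:
    """Device side: accept the package only if its tag matches.

    hmac.compare_digest is designed to avoid timing side channels, so an
    attacker cannot learn the correct tag from how long a comparison takes.
    """
    return hmac.compare_digest(sign_update(payload, key), tag)


if __name__ == "__main__":
    key = b"demo-shared-secret"  # hypothetical; real keys belong in secure storage
    package = b"model-v2 package bytes"
    tag = sign_update(package, key)
    print("genuine package accepted:", verify_update(package, tag, key))
    print("tampered package accepted:", verify_update(package + b"\x00", tag, key))
```

Verification happens before installation, so a package altered in transit or on a compromised mirror is rejected rather than executed.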

The Future of Edge AI

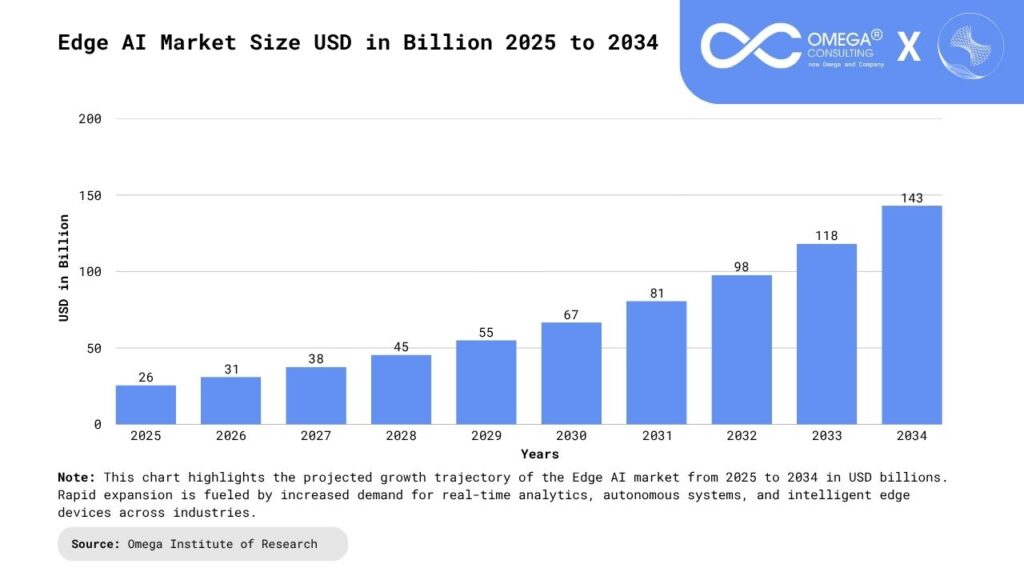

Edge AI is expected to grow rapidly as industries embrace distributed intelligence. According to MarketsandMarkets, the global Edge AI market is projected to exceed $100 billion by 2030, fueled by advances in 5G, tinyML, and AI chip innovation.

Federated Learning: Federated learning enables AI models to be trained across multiple edge devices without centralizing the raw data. Instead of sending data to a central server, devices train locally and share only model updates, which strengthens privacy. This decentralized approach allows organizations to build more robust and diverse AI models from datasets distributed across many environments. In the coming years, federated learning is expected to be crucial for privacy-preserving AI in sectors like healthcare, finance, and smart cities, where data sensitivity and compliance are top priorities.
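The best-known aggregation rule for federated learning is Federated Averaging (FedAvg): the server averages the clients' model weights, weighting each client by how many local samples it trained on. The Python sketch below shows a single aggregation step on flat weight lists; the function name, client values, and sample counts are hypothetical, and real frameworks add client sampling, multiple training rounds, and often secure aggregation or differential privacy, since model updates can themselves leak information.

```python
from typing import List, Sequence, Tuple


def federated_average(client_updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """FedAvg aggregation: average client weights, weighted by local sample count.

    Each entry is (weights, num_samples) reported by one device after local
    training. Only these numbers reach the server; raw training data stays
    on the devices.
    """
    if not client_updates:
        raise ValueError("need at least one client update")
    total = sum(n for _, n in client_updates)
    if total <= 0:
        raise ValueError("clients must report a positive number of samples")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must share the same model shape")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


if __name__ == "__main__":
    # Hypothetical round: two sites with different amounts of local data.
    updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
    print(federated_average(updates))
```

Here the client with 300 samples pulls the global model three times as strongly as the one with 100, producing `[2.5, 3.5]`.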

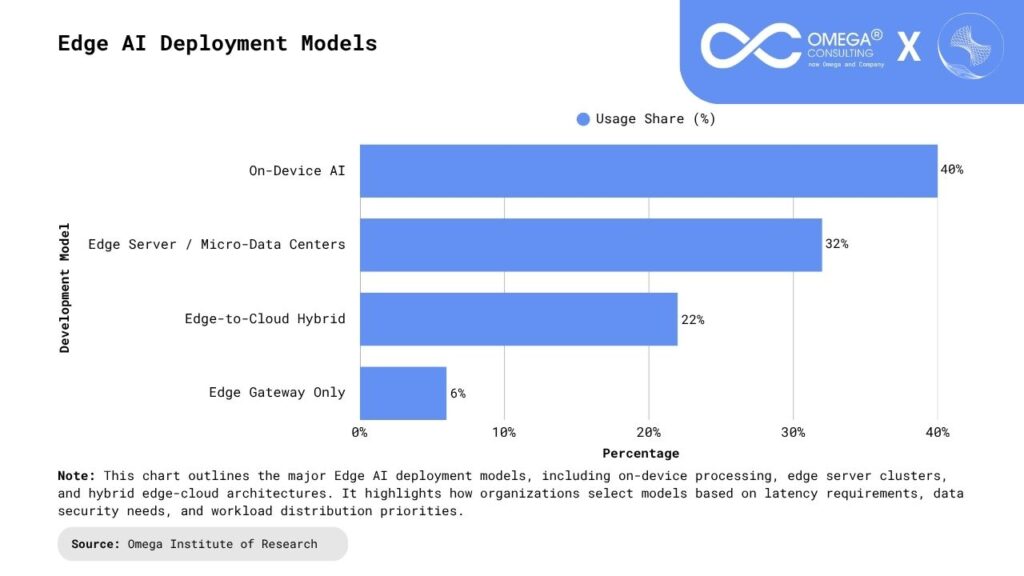

Edge-to-Cloud Continuum: The future of AI will not be a battle between edge and cloud but a synergistic relationship between them. The edge-to-cloud continuum enables seamless data flow and workload distribution where critical tasks are handled locally, and deeper analytics or model training occur in the cloud. This hybrid approach maximizes efficiency, scalability, and cost-effectiveness. Emerging orchestration tools and containerized AI deployments will further simplify management across this continuum, creating an intelligent infrastructure that adapts dynamically to workloads and network conditions.
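The split described above (critical tasks local, heavier analytics in the cloud) can be expressed as a placement policy. The Python sketch below is a deliberately simple, latency-first rule under stated assumptions: each task carries a latency budget and estimated compute times, and the `Task` fields, task names, and numbers are all hypothetical. Real orchestrators also weigh cost, energy, data sensitivity, and current load.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    latency_budget_ms: float  # deadline for a result
    local_compute_ms: float   # estimated on-device execution time
    cloud_compute_ms: float   # estimated execution time in the cloud


def place(task: Task, network_rtt_ms: float, link_up: bool) -> str:
    """Return 'edge' or 'cloud' under a simple latency-first policy."""
    # Prefer the edge whenever it meets the deadline: data stays local and
    # no bandwidth is spent.
    if task.local_compute_ms <= task.latency_budget_ms:
        return "edge"
    # Otherwise offload if the link is up and the round trip still fits.
    if link_up and network_rtt_ms + task.cloud_compute_ms <= task.latency_budget_ms:
        return "cloud"
    # Neither option meets the deadline: fall back to best-effort local work.
    return "edge"


if __name__ == "__main__":
    braking = Task("emergency_braking", latency_budget_ms=20, local_compute_ms=8, cloud_compute_ms=2)
    analytics = Task("fleet_analytics", latency_budget_ms=60_000,
                     local_compute_ms=900_000, cloud_compute_ms=5_000)
    for task in (braking, analytics):
        print(task.name, "->", place(task, network_rtt_ms=60, link_up=True))
```

Note that the braking task stays on the edge even though the cloud would compute it faster, because the 60 ms round trip alone exceeds its 20 ms budget.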

AI-Powered Networks: Telecom operators are increasingly using Edge AI to optimize network performance, predict failures, and enhance user experience. By processing data closer to network nodes, Edge AI enables real-time traffic management, anomaly detection, and adaptive resource allocation. This intelligent automation improves reliability, reduces downtime, and supports the massive data loads expected from 5G and IoT ecosystems. In the near future, AI-powered networks will become self-healing and self-optimizing, capable of learning from usage patterns and proactively maintaining high service quality.

Energy-Aware Edge Computing: As sustainability becomes a global priority, energy-efficient Edge AI solutions are emerging as a key focus area. Energy-aware edge computing leverages low-power AI chips, dynamic load balancing, and intelligent energy scheduling to minimize power consumption. These advancements not only lower operational costs but also help reduce the carbon footprint of large-scale AI deployments. By combining renewable energy sources and smart energy management, future edge ecosystems will deliver high performance with minimal environmental impact, aligning with global sustainability goals.
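Energy-aware scheduling starts from simple arithmetic: the energy of one inference is power multiplied by time (E = P × t), and a battery that must last a target lifetime can only sustain a certain average power. The Python sketch below turns that into a maximum inference rate under a simplified model in which the device draws idle power continuously and extra power only while inferring. The function name and all figures are hypothetical.

```python
def max_inferences_per_hour(
    battery_wh: float,
    target_hours: float,
    idle_power_w: float,
    active_power_w: float,
    inference_time_s: float,
) -> float:
    """Highest average inference rate that still lets the battery last `target_hours`.

    Simplified model: the device draws `idle_power_w` all the time and
    `active_power_w` while an inference runs, so each inference costs an
    extra (active - idle) * time joules (E = P x t).
    """
    if active_power_w <= idle_power_w or inference_time_s <= 0:
        raise ValueError("active power must exceed idle power and time must be positive")
    extra_joules_per_inference = (active_power_w - idle_power_w) * inference_time_s
    # Average power the battery can sustain over the target lifetime, minus idle draw.
    spare_power_w = battery_wh / target_hours - idle_power_w
    if spare_power_w <= 0:
        return 0.0  # idle draw alone already exhausts the budget
    rate = spare_power_w * 3600 / extra_joules_per_inference  # joules per hour / joules per inference
    # The device cannot run more inferences than fit back-to-back in an hour.
    return min(rate, 3600 / inference_time_s)


if __name__ == "__main__":
    rate = max_inferences_per_hour(battery_wh=12, target_hours=24, idle_power_w=0.2,
                                   active_power_w=2.2, inference_time_s=0.1)
    print(f"{rate:.0f} inferences per hour ({rate / 3600:.2f} per second)")
```

With these hypothetical figures the device can afford about 5,400 inferences per hour (1.5 per second); an energy-aware scheduler could recompute this as the battery drains and lengthen the interval between inferences accordingly.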

Conclusion

Edge AI is more than just a technological shift; it’s a strategic advantage that empowers organizations to process data closer to its source, unlocking real-time insights, enhanced privacy, and greater operational agility. As AI models become more lightweight and hardware continues to advance, Edge AI will revolutionize industries such as healthcare, manufacturing, transportation, and smart infrastructure by enabling faster, more intelligent decision-making. Businesses that adopt this technology early will gain a competitive edge, driving innovation, efficiency, and resilience in an increasingly connected and data-driven digital age. The fusion of Edge AI with 5G, IoT, and cloud computing will further accelerate automation and intelligent ecosystems across sectors. Over time, this decentralized intelligence will redefine how humans and machines collaborate, creating more responsive, sustainable, and adaptive systems. Edge AI represents not just the next step in AI evolution but the foundation of a smarter, faster, and more resilient digital future.

