- Technology

- By Omega Team

The past decade of AI progress has been driven by deep learning models that rely heavily on massive datasets and intensive computation to achieve high accuracy in tasks like image classification, language translation, and human-like text generation. However, many real-world industries such as healthcare, robotics, defense, and manufacturing often face data scarcity due to privacy concerns, high costs, or the rarity of specific events, making model training a significant challenge. This is where Few-shot and Zero-shot Learning (FSL and ZSL) redefine the landscape of AI by enabling models to generalize and adapt with limited or even no labeled examples. These techniques bring AI closer to human-like intelligence — the ability to learn new concepts and perform tasks effectively with minimal exposure to data — marking a new frontier in data-efficient AI.

Understanding the Shift: From Data-Hungry to Data-Efficient AI

Traditional machine learning workflows follow a predictable pattern: collect thousands of samples, label them, and train a model through repeated optimization cycles. This approach works well in data-rich environments, but it struggles in scenarios where data is:

Expensive (medical images, defense data): In fields like healthcare and defense, acquiring large volumes of labeled data is costly due to specialized equipment, expert annotation needs, and regulatory constraints. This makes traditional data-hungry AI approaches impractical. Data-efficient methods help reduce dependency on massive datasets by learning effectively from limited examples. They allow organizations to accelerate innovation without waiting for large-scale data collection.

Private (patient or financial data): Sensitive data such as patient records or financial transactions cannot be freely shared or labeled due to strict privacy laws and ethical considerations. Data-efficient AI allows learning from limited, anonymized, or synthetic data, preserving privacy while maintaining model accuracy. This makes it possible to develop compliant and secure AI systems in regulated industries.

Rare (anomalies or new product categories): Some events or categories occur infrequently, such as equipment failures or novel products, resulting in insufficient training examples. Few-shot and Zero-shot Learning enable AI systems to recognize or predict these rare cases by generalizing from minimal prior experience. This capability helps organizations detect outliers and respond to new scenarios faster.

Continuously changing (new words, customer trends, or threats): Dynamic environments, like social media language or cybersecurity, evolve rapidly, making static datasets obsolete. Data-efficient models can adapt quickly to new trends and information with minimal retraining, ensuring long-term relevance. Such adaptability enhances AI’s usefulness in fast-paced, real-time applications.

What is Few-shot Learning?

Few-shot Learning (FSL) is a machine learning approach that enables models to learn new tasks or recognize new categories using only a handful of labeled examples per class — typically between one and ten. Unlike traditional models that require extensive retraining and large datasets, few-shot learning leverages prior knowledge to quickly adapt to unfamiliar situations. It mirrors the way humans learn, applying past experiences and understanding to interpret new information without starting from scratch, making AI systems more efficient, flexible, and closer to human-like learning.

Meta-learning: Meta-learning trains models to quickly adapt to new tasks by exposing them to numerous small tasks during training, each containing limited examples. Instead of memorizing specific data, the model learns how to learn, developing general strategies that can be applied across new domains. This approach mirrors human adaptability, where prior experiences guide future learning. A well-known example is Model-Agnostic Meta-Learning (MAML), which learns an initial set of parameters from which a model can adapt to a new task in only a few gradient steps on a handful of examples.
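To make the idea of "learning an initialization" concrete, here is a minimal sketch of a MAML-style training loop on a toy problem. Everything in it is an assumption made for illustration: the task family (lines y = a * x with a slope drawn from [2, 4]), the one-parameter model, the learning rates, and the function names. Because the model has a single parameter, the derivative of the adapted parameter with respect to the initial one can be written by hand; real implementations rely on automatic differentiation instead.

```python
import random

def mse_grad(w, xs, ys):
    """Gradient of the mean squared error of the model y = w * x with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)

def sample_task(rng, n_support=5, n_query=5):
    """Toy task family (assumed): y = a * x, with the slope a drawn from [2, 4]."""
    a = rng.uniform(2.0, 4.0)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n_support + n_query)]
    points = [(x, a * x) for x in xs]
    return points[:n_support], points[n_support:]

def maml_train(steps=2000, inner_lr=0.1, outer_lr=0.01, seed=0):
    """Meta-learn an initial slope w that adapts well after one gradient step."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(steps):
        support, query = sample_task(rng)
        sx, sy = zip(*support)
        qx, qy = zip(*query)
        # Inner loop: one gradient step on the few support examples.
        w_adapted = w - inner_lr * mse_grad(w, sx, sy)
        # Outer loop: differentiate the query loss through the inner step.
        # For this model, d(w_adapted)/dw = 1 - inner_lr * mean(2 * x^2).
        curvature = sum(2 * x * x for x in sx) / len(sx)
        d_adapted_dw = 1.0 - inner_lr * curvature
        w -= outer_lr * mse_grad(w_adapted, qx, qy) * d_adapted_dw
    return w

meta_w = maml_train()
print(f"meta-learned initial slope: {meta_w:.2f}")
```

In this toy setting the learned initialization settles near the middle of the slope range, which is the starting point from which a single adaptation step does the least damage on average across tasks.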

Transfer Learning: Transfer learning begins with pre-training a model on a large, diverse dataset, such as ImageNet for vision models or a large text corpus for language models like BERT, so that it learns broad, reusable patterns like edges, shapes, or grammar structures. Once pre-trained, the model is fine-tuned using only a few samples from a new task, allowing it to transfer its existing knowledge efficiently. This method greatly reduces data and computational requirements while often maintaining high accuracy. It is one of the most practical and widely adopted few-shot learning techniques in modern AI applications.
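One common way to apply this idea is to freeze the pre-trained model and train only a small classification head on the few new examples. The sketch below illustrates that pattern under loud assumptions: `pretrained_features` is a hypothetical stand-in for a frozen backbone (in practice it would be a network pre-trained on a large dataset), and the four labeled points are made-up toy data.

```python
import math

def pretrained_features(x):
    """Hypothetical stand-in for a frozen, pre-trained backbone.
    Maps a raw 2-D input to a fixed feature vector (with a bias term)."""
    return [x[0] + x[1], x[0] - x[1], 1.0]

def train_head(examples, epochs=200, lr=0.5):
    """Fit a logistic-regression head on top of the frozen features."""
    feats = [(pretrained_features(x), y) for x, y in examples]
    w = [0.0] * len(feats[0][0])
    for _ in range(epochs):
        for f, y in feats:
            z = sum(wi * fi for wi, fi in zip(w, f))
            p = 1.0 / (1.0 + math.exp(-z))
            # Only the head weights change; the backbone is never updated.
            w = [wi - lr * (p - y) * fi for wi, fi in zip(w, f)]
    return w

def predict(w, x):
    """Return class 1 if the head's score is positive, else class 0."""
    z = sum(wi * fi for wi, fi in zip(w, pretrained_features(x)))
    return 1 if z > 0 else 0

# Toy few-shot training set: two labeled examples per class.
few_shot = [((1.0, 0.5), 1), ((0.8, 1.2), 1), ((-1.0, -0.5), 0), ((-0.7, -1.1), 0)]
head = train_head(few_shot)
print(predict(head, (2.0, 1.0)), predict(head, (-2.0, -1.0)))
```

Training only the head keeps the number of learned parameters small, which is what makes a handful of examples sufficient in this setup.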

Similarity or Metric-based Learning: Similarity-based learning methods, including Siamese Networks, Prototypical Networks, and Matching Networks, learn an embedding space in which examples can be compared directly, rather than learning each class from a vast labeled dataset. In Prototypical Networks, for example, each class is represented by a prototype in a high-dimensional vector space, computed as the mean of its few embedded examples, and a new example is assigned to the class whose prototype is nearest. This process mimics how humans recognize objects or concepts based on similarity. As a result, the model can classify new data effectively even with very few examples per category.
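The prototype idea can be sketched in a few lines. This is a minimal illustration of the classification step used by Prototypical Networks, not a full implementation: it assumes the embeddings have already been produced by some trained encoder, and the two-dimensional vectors and class names are invented toy values.

```python
import math

def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def build_prototypes(support):
    """One prototype per class: the mean of that class's support embeddings."""
    return {label: mean_vector(vecs) for label, vecs in support.items()}

def classify(query, prototypes):
    """Assign the query to the class with the nearest prototype (Euclidean distance)."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))

# Toy 3-shot support set; embeddings are assumed outputs of an encoder.
support = {
    "cat": [[0.9, 0.1], [1.0, 0.2], [0.8, 0.0]],
    "dog": [[0.1, 0.9], [0.2, 1.0], [0.0, 0.8]],
}
prototypes = build_prototypes(support)
print(classify([0.7, 0.3], prototypes))
```

Adding a new class only requires computing one more prototype from its few examples; the encoder itself does not need to be retrained.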

What is Zero-shot Learning?

Zero-shot Learning (ZSL) is an advanced machine learning approach that enables models to recognize or perform tasks they have never encountered before, without requiring any labeled examples of the new classes. It relies on semantic understanding, allowing the model to connect known concepts to unfamiliar ones using linguistic, contextual, or visual relationships. By leveraging this ability to infer meaning, ZSL allows AI systems to generalize knowledge across unseen categories, making them more adaptable and closer to human-like reasoning.

Semantic Embeddings: Zero-shot learning relies on shared vector spaces, such as word or image embeddings, to represent both classes and features in a common framework. For example, if a model understands the concepts of “horse” and “stripes,” it can infer what a “zebra” is by analyzing their semantic relationships within the embedding space. This enables the model to recognize unseen categories based on the contextual meaning of known words or features, bridging the gap between known and unknown data.
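The "horse plus stripes" intuition can be sketched with toy vectors. In this illustration every number is invented: the three-dimensional concept vectors, the composed "zebra" embedding, and the query embedding are all assumptions standing in for what a trained text or image encoder would produce.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def add(u, v):
    """Element-wise sum, used to compose a new concept from known ones."""
    return [a + b for a, b in zip(u, v)]

# Toy concept vectors (assumed); a real system would take these from an encoder.
concepts = {
    "horse": [1.0, 0.0, 0.1],
    "stripes": [0.0, 1.0, 0.0],
    "cat": [0.1, 0.0, 1.0],
}

# "zebra" has no training examples; its embedding is composed from known concepts.
class_embeddings = {
    "horse": concepts["horse"],
    "cat": concepts["cat"],
    "zebra": add(concepts["horse"], concepts["stripes"]),
}

def zero_shot_classify(query_embedding):
    """Pick the class whose embedding is most similar to the query."""
    return max(class_embeddings, key=lambda c: cosine(query_embedding, class_embeddings[c]))

# Assumed embedding of an image showing a striped, horse-like animal.
print(zero_shot_classify([0.9, 0.8, 0.1]))
```

The key point is that the classifier never needs a labeled zebra: it only needs the query and the class descriptions to live in the same vector space.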

Attribute-based Reasoning: In this approach, the model associates objects with descriptive attributes rather than relying on direct examples. For instance, it may be known that a “horse” has four legs, a mane, and hooves, while a “zebra” is described as a horse-like animal with stripes. By connecting these shared characteristics, the model can correctly identify a zebra without ever having seen one during training. This attribute-based reasoning mimics human intuition, where we infer unknown objects through logical associations.
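A simple way to picture attribute-based reasoning is to score each class by how well its attribute description matches what attribute detectors report for an input. The sketch below assumes such detectors exist and were trained only on seen classes; the attribute list, class signatures, and probabilities are hypothetical toy values, and the scoring rule treats attributes as independent.

```python
ATTRIBUTES = ["four_legs", "mane", "hooves", "stripes"]

# Binary attribute signatures per class (assumed). "zebra" is described
# by its attributes only; no zebra examples are needed.
SIGNATURES = {
    "horse": [1, 1, 1, 0],
    "zebra": [1, 1, 1, 1],
    "tiger": [1, 0, 0, 1],
}

def class_score(attribute_probs, signature):
    """Probability that the detected attributes match a class signature,
    treating the attributes as independent."""
    score = 1.0
    for p, present in zip(attribute_probs, signature):
        score *= p if present else (1.0 - p)
    return score

def classify_by_attributes(attribute_probs):
    """Return the class whose signature best explains the detected attributes."""
    return max(SIGNATURES, key=lambda c: class_score(attribute_probs, SIGNATURES[c]))

# Assumed detector outputs for one image, in ATTRIBUTES order.
detected = [0.95, 0.80, 0.90, 0.90]
print(classify_by_attributes(detected))
```

Because a class is defined purely by its signature, supporting a new category amounts to adding one more row of attributes.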

Large-scale Pretraining: Modern Zero-shot Learning is largely driven by powerful foundation models such as GPT, CLIP, or T5, which are trained on enormous datasets: large text corpora in the case of GPT and T5, and paired images and captions in the case of CLIP. These models develop a rich conceptual understanding of language, vision, and context, enabling them to perform unseen tasks without explicit training. Through techniques like prompt-based inference, they can apply learned knowledge flexibly across domains, allowing AI to generalize beyond the boundaries of its original training data.

Real-world Applications

Natural Language Processing (NLP): Few-shot and zero-shot learning have transformed NLP, particularly through large language models like GPT, BERT, and T5. In few-shot prompting, providing a few examples of the desired behavior in a prompt guides the model to follow that pattern. Zero-shot prompting, in contrast, allows the model to perform tasks from clear instructions alone, such as translating text or summarizing content. These techniques enable advanced NLP capabilities, including text generation, classification, summarization, and question-answering with minimal supervision.
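The difference between the two prompting styles is easiest to see in the prompt text itself. The helper below is a hypothetical sketch: `build_prompt` and its "Input:/Output:" layout are illustrative choices, not any model vendor's API or required format. With no examples it produces a zero-shot prompt; with a few examples it produces a few-shot prompt.

```python
def build_prompt(instruction, query, examples=()):
    """Assemble a prompt: an instruction, optional worked examples, then the query."""
    parts = [instruction.strip(), ""]
    for source, target in examples:
        parts += [f"Input: {source}", f"Output: {target}", ""]
    parts += [f"Input: {query}", "Output:"]
    return "\n".join(parts)

# Zero-shot: the instruction alone describes the task.
zero_shot = build_prompt("Translate English to French.", "cheese")

# Few-shot: a couple of demonstrations show the expected pattern.
few_shot_prompt = build_prompt(
    "Translate English to French.",
    "cheese",
    examples=[("sea otter", "loutre de mer"), ("bread", "pain")],
)

print(zero_shot)
print("---")
print(few_shot_prompt)
```

The resulting string would then be sent to a language model; the model's weights are unchanged in both cases, and only the prompt differs.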

Computer Vision: Vision-language models such as CLIP and ALIGN leverage zero-shot learning to connect visual and textual understanding, allowing models to identify new image categories based solely on text descriptions. They enable dynamic content moderation and perform open-world object recognition where classes are not predefined. This flexibility allows AI systems to generalize to new visual concepts without requiring extensive labeled datasets. As a result, computer vision applications become more adaptive and scalable across diverse domains.

Healthcare: Few-shot learning plays a critical role in healthcare by enabling rare disease detection where limited medical images exist and supporting personalized diagnostics with minimal patient data. It also accelerates drug discovery, allowing new compounds to be classified and analyzed quickly with scarce training samples. By learning effectively from small datasets, few-shot methods improve decision-making in medical and pharmaceutical applications while reducing the dependency on large, annotated datasets.

Robotics and Autonomous Systems: Zero-shot reasoning allows robots and autonomous vehicles to adapt to unseen environments or novel objects without explicit retraining. They can perform new tasks, such as picking up unfamiliar objects, by relying on learned contextual reasoning rather than memorized scenarios. This ability to generalize enhances safety, efficiency, and flexibility in autonomous operations. It enables intelligent machines to navigate and interact with the real world more like humans do.

Customer Experience and Chatbots: Few-shot and zero-shot prompting significantly improve conversational AI systems by helping them understand new intents, such as trending phrases or emerging product names, without extensive retraining. They allow chatbots to provide context-aware, accurate responses and scale efficiently across multiple domains and languages. This capability enhances customer engagement and ensures AI-driven support remains adaptable to rapidly changing user needs.

The Role of Generative AI

Generative AI models like GPT-4, GPT-5, Claude, and Gemini have showcased powerful few-shot and zero-shot capabilities through prompt engineering, using language as the primary interface for learning new tasks. In few-shot prompting, users provide a few examples within the prompt, and the model picks up the pattern in context without any update to its weights, such as translating sentences into another language after seeing just two or three examples. Zero-shot prompting, on the other hand, enables the model to perform tasks directly from natural language instructions, like summarizing a paragraph in a single sentence, without any prior examples. This shift transforms AI interaction from traditional data-driven training to instruction-driven reasoning, making models more flexible, intuitive, and human-like in their understanding. It also reduces the dependency on large labeled datasets and accelerates the deployment of AI across diverse domains, from content creation and translation to customer support and decision-making tasks.

Challenges and Limitations

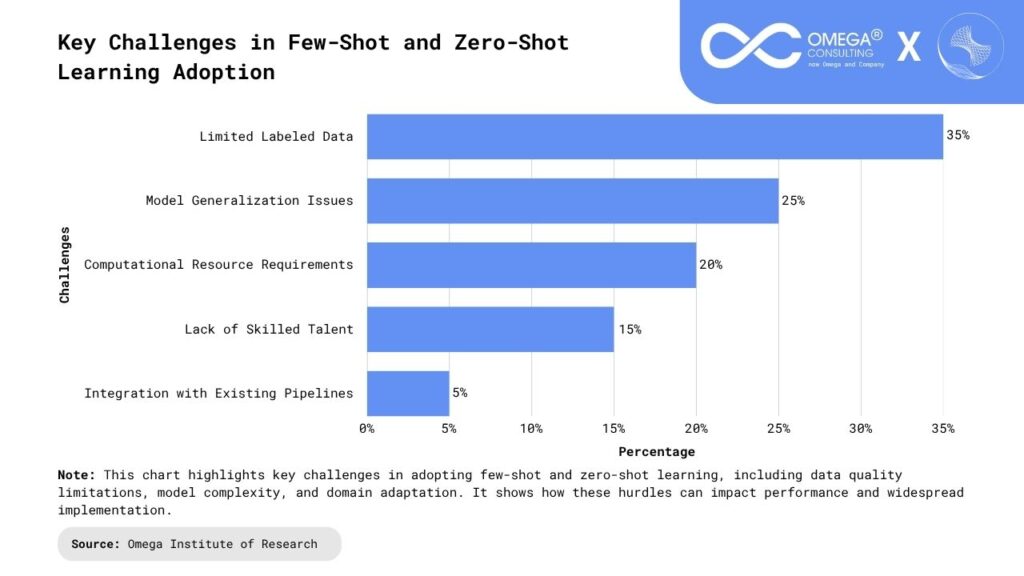

Bias in Pretrained Data: Few-shot and zero-shot models rely heavily on pretrained data, which can carry inherent biases from the source datasets. These biases can influence predictions and lead to unfair or skewed outcomes, making careful dataset curation and bias-mitigation strategies essential.

Domain Shift: Limited examples may not capture the full diversity of real-world scenarios, so models can struggle with tasks that differ significantly from their pretraining domains, which affects robustness and reliability.

Interpretability: The decision-making process of these models is often opaque and hard to explain, which is a particular concern in safety-critical applications like healthcare or autonomous systems.

Evaluation: Traditional evaluation metrics such as accuracy are insufficient to measure generalization, adaptability, and reasoning, highlighting the need for new task-agnostic frameworks.

Ethical Concerns: Large-scale pretraining on internet data raises issues of misinformation, cultural bias, and data ownership, necessitating careful monitoring, ethical guidelines, and responsible AI practices to ensure fairness and accountability.

The Future: Toward True General Intelligence

Few-shot and zero-shot learning are widely seen as important steps toward Artificial General Intelligence (AGI), because they let AI systems adapt and reason across diverse domains rather than within a single trained task. Future advancements are expected to focus on multimodal generalization, where models integrate vision, speech, text, and sensory data to gain a richer understanding of the world. Continual learning will allow AI systems to update their knowledge over time without suffering from catastrophic forgetting, maintaining performance across evolving tasks. Additionally, causal and contextual reasoning will help models understand not just what happens, but why, while ethical guardrails will help ensure that AI adapts responsibly and safely across new and unforeseen contexts. These developments are expected to make AI more human-like in its reasoning, capable of complex decision-making, and increasingly useful in real-world scenarios, from scientific research to personalized assistance. The integration of these capabilities points toward a future where AI can autonomously learn, generalize, and apply knowledge across multiple domains with minimal human intervention.

Conclusion

Few-shot and zero-shot learning are redefining the way machines learn by enabling models to perform tasks with minimal examples, significantly reducing data dependency while enhancing adaptability and generalization across domains. These approaches are transforming applications ranging from diagnosing rare diseases to powering conversational AI, bringing us closer to AI that learns like humans—efficiently, flexibly, and intelligently. By allowing models to leverage prior knowledge, reason contextually, and generalize to new tasks, they lay the groundwork for more versatile and human-like intelligence. In an era where adaptability is a critical advantage, few-shot and zero-shot learning are not merely technical innovations but the foundation for the next generation of intelligent systems.
