- Technology

- By Omega Team

Prompt engineering is a crucial technique for optimizing generative AI models like GPT, DALL·E, and Stable Diffusion to produce accurate, useful, and creative outputs. As AI continues to evolve, the ability to craft well-structured prompts has become essential across industries such as content creation, programming, marketing, education, and research. Effective prompt design enhances productivity, automates tasks, and drives innovation by improving AI-generated responses. By understanding how different prompts influence model behavior, users can refine outputs to align with specific goals, whether for generating high-quality content, writing efficient code, or assisting in data analysis. Moreover, as AI systems become more sophisticated, prompt engineering is evolving into a critical skill for optimizing interactions between humans and machines. This article explores the fundamentals of prompt engineering, including its definition, significance, key techniques, industry applications, challenges, and future trends, providing insights into how individuals and businesses can leverage this skill to maximize the potential of AI-driven solutions.

What is Prompt Engineering?

Prompt engineering refers to the process of designing and refining input text to guide AI models in generating desired responses. Since AI models rely on textual instructions to produce results, the structure, wording, and context of a prompt significantly influence the quality, accuracy, and relevance of the output. Effective prompt engineering minimizes ambiguity, enhances precision, and reduces the need for extensive post-processing, ultimately improving efficiency and user experience. It can be considered both an art and a science, requiring a deep understanding of how AI models interpret language and respond to different types of input. By strategically crafting prompts, users can shape AI-generated content for various purposes, from generating creative writing and marketing copy to automating coding tasks and conducting research. The approach can range from simple adjustments in phrasing to complex multi-turn dialogues designed to refine the AI’s understanding of a query. Additionally, advanced prompt engineering techniques, such as using contextual cues, role-based prompting, and few-shot learning, allow users to exert greater control over AI-generated responses, making it a powerful tool for maximizing the potential of generative AI models across industries.

Why is Prompt Engineering Important?

Maximizing AI Performance: A well-crafted prompt helps AI generate more accurate and relevant responses, avoiding unnecessary or off-topic content. By structuring prompts effectively, users can guide AI to focus on key details, improving the precision of generated outputs. This is particularly valuable in applications such as automated customer support, data analysis, and creative content generation, where clarity and specificity are crucial.

Reducing Errors and Bias: Carefully designed prompts reduce ambiguity and can lessen bias in AI outputs, helping keep responses aligned with the intended purpose. Because AI models learn from vast datasets that carry their own biases, poorly structured prompts can lead to misleading or biased results. By refining prompt wording and context, users can mitigate unintended biases and improve the fairness and reliability of AI-generated content, making it more suitable for professional and ethical use.

Enhancing Productivity: Precise prompts save time by reducing the need for multiple iterations or edits. This is especially important in fields like content generation and software development, where efficiency is key. Well-optimized prompts enable AI to deliver high-quality drafts, summaries, or code snippets faster, allowing professionals to focus on strategic tasks rather than manual refinements, ultimately increasing workflow efficiency.

Broad Applications: Prompt engineering benefits various industries, including healthcare, education, finance, and entertainment, helping AI systems become more effective across multiple domains. In healthcare, for example, AI-powered diagnostic tools rely on precise prompts to generate accurate assessments, while in finance, structured prompts help AI analyze market trends. The versatility of prompt engineering ensures that AI can adapt to industry-specific needs, unlocking new possibilities for automation and innovation.

Key Techniques in Prompt Engineering

Clarity and Specificity: Providing clear and specific instructions leads to more accurate outputs. Vague or ambiguous prompts can produce inconsistent or off-topic responses, making it difficult to achieve the desired results. A well-defined prompt reduces the risk of misinterpretation and ensures that the AI delivers meaningful and contextually appropriate responses. For instance, specifying “Write a 500-word article on renewable energy trends with recent statistics” is far more effective than simply asking, “Tell me about renewable energy.” Additionally, using direct language and avoiding overly broad requests ensures that the AI stays focused on the intended subject matter.

Role-Based Instructions: Assigning a role to the AI helps tailor the response style and depth. This technique is particularly useful in professional or educational applications where different tones, perspectives, or levels of detail are required. For example, prompting AI with “Act as a financial analyst and provide a market trend report” will generate a more structured and analytical response compared to a general request. Role-based prompts help maintain consistency and ensure the AI aligns with the intended audience and purpose. Furthermore, defining the AI’s role allows for more industry-specific and technical outputs, enhancing the relevance of the generated content.
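A role-based prompt can be sketched in code as a short message list. The example below is a minimal illustration, assuming the common chat convention of a "system" message that sets the role and a "user" message that carries the task. The function name `build_role_messages` and the wording of the system message are hypothetical and not tied to any particular model or library.

```python
from typing import Dict, List


def build_role_messages(role: str, task: str) -> List[Dict[str, str]]:
    """Return a chat-style message list that assigns a role before the task.

    The "system"/"user" keys follow a widely used chat convention; check the
    format your own model expects, since this is only an assumption here.
    """
    system = f"Act as {role}. Match the tone and depth that role requires."
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": task},
    ]


messages = build_role_messages(
    "a financial analyst",
    "Provide a market trend report.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Keeping the role in its own message makes it easy to reuse the same task with a different persona, for example a teacher or a legal reviewer.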

Using Constraints: Specifying format, length, tone, or style helps control the AI’s response. This is useful for aligning AI-generated content with specific use cases, such as formal reports, casual blog posts, or structured code snippets. For example, requesting “Summarize this research paper in 200 words using a formal tone” ensures brevity and professionalism. Constraints guide AI output to fit industry standards, making content more usable and reducing unnecessary revisions. Moreover, setting strict parameters can help maintain brand voice consistency in marketing, technical documentation, and corporate communications.
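Constraints are easiest to keep consistent when they are added by code and checked afterwards. The sketch below assumes two hypothetical helpers: `add_constraints` appends a length and tone requirement to a task, and `within_word_limit` checks a response using a plain whitespace word count. The sample response is a placeholder, not real model output.

```python
def add_constraints(task: str, max_words: int, tone: str) -> str:
    """Append explicit length and tone constraints to a task description."""
    return f"{task}\nConstraints: use at most {max_words} words and a {tone} tone."


def within_word_limit(text: str, max_words: int) -> bool:
    """Check a response against the length constraint (whitespace word count)."""
    return len(text.split()) <= max_words


prompt = add_constraints("Summarize this research paper.", max_words=200, tone="formal")
print(prompt)

# Placeholder standing in for a model response; a real one comes from your model.
sample_response = "This placeholder text stands in for a model summary."
print(within_word_limit(sample_response, 200))
```

Checking the response against the same limit that was requested gives a simple signal for when to retry or tighten the prompt.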

Providing Context: Adding background information improves response relevance. If the AI lacks context, it may generate generic or inaccurate responses, leading to suboptimal results. For instance, instead of asking, “Explain machine learning,” a better prompt would be, “Explain machine learning to a non-technical audience with examples from healthcare and finance.” Providing relevant context ensures the AI tailors responses appropriately for different users and use cases. Additionally, adding details such as target audience, purpose, and specific areas of focus further refines AI outputs, making them more aligned with user expectations.
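One way to make context a habit is to use a template with explicit slots for audience, purpose, and focus areas. The following is a minimal sketch using Python's standard `string.Template`. The slot names and the `with_context` helper are illustrative choices, not a required format.

```python
from string import Template
from typing import List

# Slots for the background details the paragraph above recommends supplying.
CONTEXT_TEMPLATE = Template(
    "Audience: $audience\n"
    "Purpose: $purpose\n"
    "Focus areas: $focus\n\n"
    "Task: $task"
)


def with_context(task: str, audience: str, purpose: str, focus: List[str]) -> str:
    """Wrap a bare task with audience, purpose, and focus-area context."""
    return CONTEXT_TEMPLATE.substitute(
        task=task,
        audience=audience,
        purpose=purpose,
        focus=", ".join(focus),
    )


print(
    with_context(
        "Explain machine learning.",
        audience="non-technical readers",
        purpose="introductory overview",
        focus=["healthcare", "finance"],
    )
)
```

Because `substitute` raises an error when a slot is missing, the template also acts as a checklist that no piece of context was forgotten.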

Step-by-Step Breakdown: Breaking down complex prompts into steps improves the logical flow of responses. This technique is particularly helpful in technical writing, problem-solving, and structured analysis. For example, rather than asking, “How do I build a website?” a more effective prompt would be, “Explain the process of building a website, covering domain registration, hosting, front-end development, and back-end setup.” Step-by-step instructions help AI generate more structured, coherent, and actionable responses. Additionally, this method helps guide AI in answering multi-part queries logically, reducing the risk of fragmented or incomplete responses.
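The website example above can be expressed as a small helper that turns a goal and a list of steps into a numbered prompt. This is a sketch only. The function name `build_stepwise_prompt` and the instruction wording are assumptions made for illustration.

```python
from typing import List


def build_stepwise_prompt(goal: str, steps: List[str]) -> str:
    """Turn a goal and an ordered list of steps into a numbered prompt."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"{goal}\n"
        "Cover the following steps in order, with one section per step:\n"
        f"{numbered}"
    )


print(
    build_stepwise_prompt(
        "Explain the process of building a website.",
        [
            "domain registration",
            "hosting",
            "front-end development",
            "back-end setup",
        ],
    )
)
```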

Few-Shot and Zero-Shot Learning: A few-shot prompt includes a small number of worked examples that show the AI the pattern to follow, while a zero-shot prompt gives only the instruction and relies on the AI’s general understanding. Supplying examples helps refine responses for specific scenarios and reduces trial and error. For example, a few-shot prompt like “Here are two examples of a persuasive product description. Now write one for a smartwatch” helps AI mimic the desired writing style. In contrast, zero-shot prompting is useful for broad topics where no examples are at hand, which makes it well suited to flexible, adaptable AI applications. Additionally, incorporating structured examples in a few-shot prompt can enhance AI’s ability to generate industry-specific or creative responses with greater precision.
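The difference between the two styles is easy to see when the prompt is assembled in code. The sketch below assumes a simple "Product / Description" layout. The helper name `build_few_shot_prompt` and the two example descriptions are invented for illustration. Passing an empty example list yields the zero-shot version of the same prompt.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    new_product: str,
) -> str:
    """Assemble a prompt from an instruction, example pairs, and a new input.

    With an empty `examples` list the result is a zero-shot prompt.
    """
    parts = [instruction, ""]
    for product, description in examples:
        parts.append(f"Product: {product}")
        parts.append(f"Description: {description}")
        parts.append("")
    # The final entry is left open for the model to complete.
    parts.append(f"Product: {new_product}")
    parts.append("Description:")
    return "\n".join(parts)


# Invented example pairs that demonstrate the desired style.
examples = [
    ("wireless earbuds", "Crisp sound that goes wherever you do."),
    ("travel mug", "Keeps your coffee hot from doorstep to desk."),
]
instruction = "Write a persuasive one-line product description."

few_shot = build_few_shot_prompt(instruction, examples, "smartwatch")
zero_shot = build_few_shot_prompt(instruction, [], "smartwatch")
print(few_shot)
print("---")
print(zero_shot)
```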

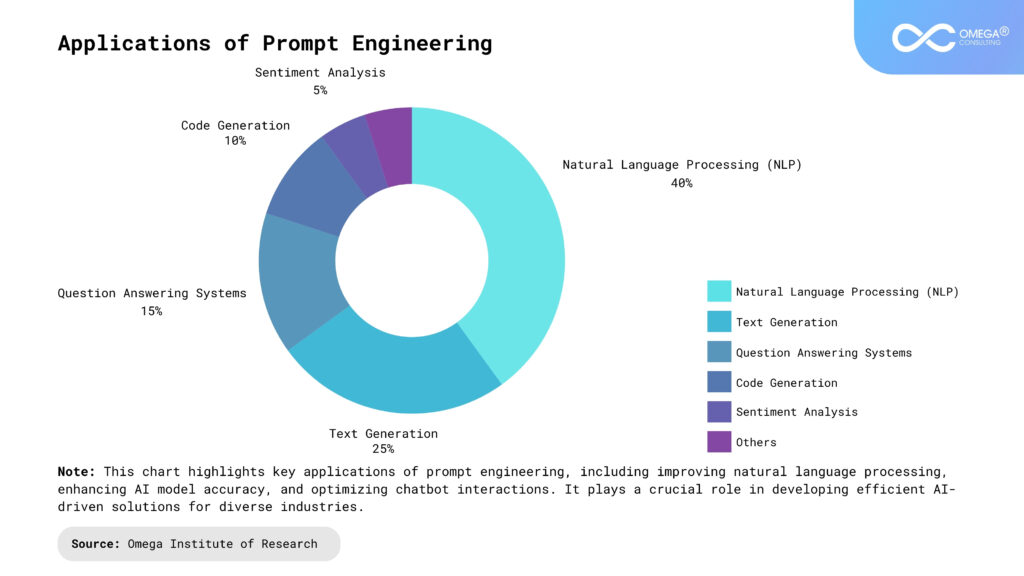

Applications of Prompt Engineering

Content Creation: Prompt engineering assists in generating high-quality blog posts, social media content, and marketing copy. It helps with brainstorming new ideas, improving storytelling, and structuring articles effectively, making the writing process more efficient. It also eases writer’s block by providing structured suggestions and refining drafts to enhance readability and engagement. AI-powered tools can support SEO strategies by generating keyword-rich content, improving online visibility. Moreover, well-designed prompts help keep tone, voice, and messaging consistent across different platforms.

Coding and Software Development: Prompt engineering facilitates the generation of code snippets, documentation, and automated test cases. It assists developers in debugging and optimizing code efficiency by providing structured solutions and recommendations. AI-driven coding assistants help streamline development workflows, reducing the time spent on repetitive coding tasks. Additionally, AI can generate explanations and suggest alternative approaches, helping developers learn new programming concepts. By improving efficiency and accuracy, prompt engineering enhances developer productivity, making software development more agile and scalable.

Education and Learning: AI-driven tutoring enables personalized learning experiences across various subjects and skill levels. It enhances interactive learning by generating quizzes, explanations, and summaries, making complex topics more accessible. Prompt engineering supports language learning through AI-powered translation and contextual language exercises. Furthermore, AI can analyze student performance and adapt lesson plans accordingly, offering a customized learning experience. This ensures students receive targeted assistance in areas where they need the most improvement, fostering better comprehension and engagement.

Customer Support and Chatbots: Optimized prompts improve chatbot interactions by ensuring responses are clear, relevant, and helpful. AI-driven chatbots can provide better personalization by tailoring responses based on user queries, improving customer satisfaction. Faster response times and more accurate replies enhance the overall customer service experience. Additionally, AI-powered bots can handle multiple customer inquiries simultaneously, reducing wait times and improving operational efficiency. Businesses can integrate multilingual support, enabling AI to cater to global audiences and enhance accessibility.

Healthcare and Research: Prompt engineering assists in summarizing complex medical papers and research findings, making them more digestible for professionals and patients alike. AI-powered analysis aids in drug discovery and medical diagnosis, helping researchers and healthcare providers make data-driven decisions. Additionally, AI automation of repetitive administrative tasks allows medical professionals to focus on higher-level decision-making. AI-driven chatbots can also assist in patient triage by providing initial symptom assessments and guiding individuals toward appropriate medical care. Moreover, AI-generated reports and summaries help doctors streamline documentation, reducing paperwork and enhancing patient care efficiency.

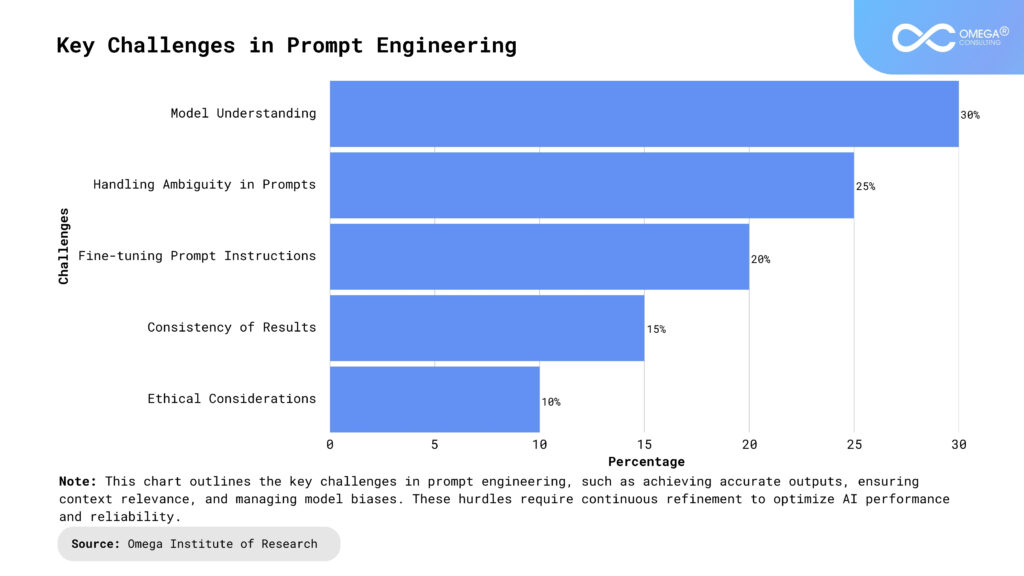

Challenges in Prompt Engineering

Prompt Sensitivity: Minor changes in phrasing can yield vastly different results, making it difficult to predict AI responses reliably. Even slight variations in word choice, sentence structure, or punctuation can lead to significantly different outputs. This unpredictability makes it challenging for users to maintain consistency in AI-generated content across multiple queries. As a result, extensive testing and refinement are often required to achieve the desired response, increasing the time and effort involved in prompt engineering. Advanced prompt-tuning strategies, including iterative refinement and feedback loops, are being explored to address this issue.
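The testing and refinement described above can be made repeatable with a small harness that runs several phrasings of one request and measures how far the outputs diverge. The sketch below uses `difflib.SequenceMatcher` from the Python standard library as a rough text-similarity measure. `stub_generate` is a hypothetical stand-in for a real model call and only exists so the example runs on its own.

```python
from difflib import SequenceMatcher
from itertools import combinations
from typing import Callable, Dict, List, Tuple


def output_similarity(a: str, b: str) -> float:
    """Return a 0..1 character-level similarity ratio between two outputs."""
    return SequenceMatcher(None, a, b).ratio()


def compare_variants(
    variants: List[str],
    generate: Callable[[str], str],
) -> Dict[Tuple[str, str], float]:
    """Generate one output per prompt variant and score every pair."""
    outputs = {variant: generate(variant) for variant in variants}
    return {
        (a, b): output_similarity(outputs[a], outputs[b])
        for a, b in combinations(variants, 2)
    }


def stub_generate(prompt: str) -> str:
    """Hypothetical stand-in for a model: reverses the prompt's word order."""
    return " ".join(reversed(prompt.split()))


variants = [
    "Summarize the report in three bullet points.",
    "Summarize the report in 3 bullet points.",
    "Give a three-bullet summary of the report.",
]
for (a, b), score in compare_variants(variants, stub_generate).items():
    print(f"{score:.2f}  {a!r} vs {b!r}")
```

With a real model in place of the stub, low scores between near-identical phrasings point to prompts that need tightening before they are relied on.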

Bias and Ethical Considerations: AI models may inherit biases from training data, leading to ethical concerns in prompt design. If prompts are not carefully structured, AI may reinforce stereotypes, generate misleading information, or reflect historical biases present in datasets. This poses challenges in ensuring fairness, inclusivity, and accuracy in AI-generated content. To mitigate these risks, prompt engineers must actively experiment with diverse prompt structures, employ bias detection techniques, and continuously monitor outputs for unintended biases. Ethical AI development frameworks are being integrated into prompt engineering to address these concerns systematically.

Token Limits and Efficiency: Long prompts may exceed model constraints or slow down processing, requiring users to balance brevity with detail. AI models operate within a fixed token limit that the prompt and the generated response typically share, so an overly detailed prompt can leave too little room for the output, cause truncation, or add computational load. Striking the right balance between including enough context and maintaining efficiency is crucial. Engineers often need to optimize prompt length, use summarization techniques, or break prompts into multiple steps to avoid performance bottlenecks. The development of AI models with expanded context windows is expected to alleviate some of these limitations in the future.
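A simple way to stay inside a limit is to budget the prompt before sending it. The sketch below makes a loud simplifying assumption: it counts one token per whitespace-separated word, which real tokenizers do not do, so treat the numbers as rough estimates. The helper names `estimate_tokens` and `fit_context` are hypothetical.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Rough proxy only: one token per whitespace-separated word."""
    return len(text.split())


def fit_context(instruction: str, chunks: List[str], budget: int) -> str:
    """Keep the instruction, then add context chunks in order until the
    estimated token budget would be exceeded."""
    used = estimate_tokens(instruction)
    kept: List[str] = []
    for chunk in chunks:
        cost = estimate_tokens(chunk)
        if used + cost > budget:
            break  # stop at the first chunk that no longer fits
        kept.append(chunk)
        used += cost
    return "\n\n".join([instruction] + kept)


prompt = fit_context(
    "Summarize the notes below.",
    ["alpha beta gamma", "delta epsilon", "zeta eta theta iota"],
    budget=9,
)
print(prompt)
print(estimate_tokens(prompt))
```

Chunks are added in order, so the most important context should come first. Swapping `estimate_tokens` for the tokenizer that matches your model would make the budget exact.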

Generalization Issues: Some AI models struggle with highly specialized prompts, requiring additional refinement or context to generate useful outputs. When dealing with niche topics, AI may provide overly generic responses that lack domain-specific depth. This is particularly problematic in fields like legal, medical, and technical writing, where precision is essential. To address this, users often employ techniques like few-shot learning, structured examples, or supplementary data to improve the model’s comprehension and adaptability. Industry-specific AI models trained on curated datasets are being developed to enhance the accuracy of responses in specialized fields.

Future of Prompt Engineering

Automated Prompt Optimization: AI-driven tools that refine prompts dynamically to improve accuracy. These tools analyze patterns in AI-generated outputs and suggest prompt modifications to enhance relevance and coherence. By leveraging machine learning, automated optimization can reduce trial-and-error in crafting effective prompts, making the process more efficient. In the future, these advancements may enable real-time adaptation of prompts, allowing AI to fine-tune responses based on evolving user needs. Continuous learning mechanisms in AI models will further enhance their ability to optimize prompts autonomously.

Multimodal Prompts: Combining text with images, voice, or video to enhance interactions. Future AI models will likely integrate multiple input formats, allowing users to provide richer context through different media types. For instance, AI could generate descriptions based on images or respond to voice commands with personalized text outputs. This expansion into multimodal capabilities will enable more interactive and immersive AI applications in education, marketing, and creative fields. The convergence of multimodal AI with augmented and virtual reality technologies could further redefine human-AI interaction.

Human-AI Collaboration: Increasing productivity by blending human creativity with AI efficiency. Rather than replacing human input, future prompt engineering will focus on augmenting human capabilities through AI-assisted workflows. This includes co-writing tools, AI-driven brainstorming assistants, and intelligent automation that supports decision-making. By refining prompts collaboratively, users can leverage AI’s computational power while maintaining control over quality and originality. Enhanced explainability features in AI models will help users understand and interpret AI-generated outputs more effectively.

Fine-Tuned Models: Developing models tailored to domain-specific needs for better prompt interpretation. Instead of relying on generalized AI systems, industries will increasingly adopt fine-tuned models trained on specialized datasets. These models will be better equipped to handle technical jargon, legal terminology, or industry-specific nuances. As AI evolves, prompt engineering will increasingly be paired with adaptive, field-specific models that deliver highly accurate and relevant responses. The emergence of self-improving AI models that continuously adapt to new industry trends could further enhance their effectiveness.

Conclusion

Prompt engineering is a foundational skill for leveraging generative AI effectively. By mastering techniques such as specificity, role assignment, and context provision, users can unlock AI’s full potential across industries. As AI continues to advance, prompt engineering will play a critical role in optimizing machine-human interactions, ensuring more accurate, ethical, and impactful results. The demand for skilled prompt engineers will grow as AI becomes more deeply integrated into business operations, education, and creative industries. Future advancements in AI models will further refine prompt engineering strategies, making interactions more intuitive and efficient. By staying updated with emerging trends and continuously experimenting with new techniques, users can maximize the effectiveness of AI-driven solutions. In this rapidly evolving digital landscape, prompt engineering will remain a key driver of innovation, shaping the future of AI applications worldwide.

